Exploring the new safety features of ChatGPT 5

How OpenAI’s latest safeguards make AI more transparent, trustworthy, and safe to use in your daily work

As someone who strongly believes in the importance of safety when using artificial intelligence, I want to share why I think the new safeguards in ChatGPT 5 are such a big deal. AI tools are becoming part of our everyday lives — in business, education, creative work, and healthcare — and it’s essential that they are not just powerful, but also trustworthy and safe.

With the launch of ChatGPT 5, we’re seeing some of the most significant safety improvements yet. These updates make AI support far more transparent, responsible, and reliable. Here’s my take on the changes, how they work, and why they matter more now than ever.

Why safety matters more than ever

Before ChatGPT 5, you might have run into a frustrating AI “wall.” The model could refuse to answer a question (“Sorry, I can’t help with that”), give a vague response, or — more worrying — produce inaccurate or risky information.

These weren’t rare glitches. Businesses worried about confidential data leaks, teachers saw unpredictable responses, and healthcare professionals knew not to trust medical advice from a chatbot.

The new safeguards in ChatGPT 5 tackle these issues head-on, building trust and making safety central to every interaction.

From hard refusals to safe completions

Older AI models often shut down anything that looked risky or controversial:

“I’m sorry, I can’t assist with that.”

While well-intentioned, this blunt approach left users without clear guidance.

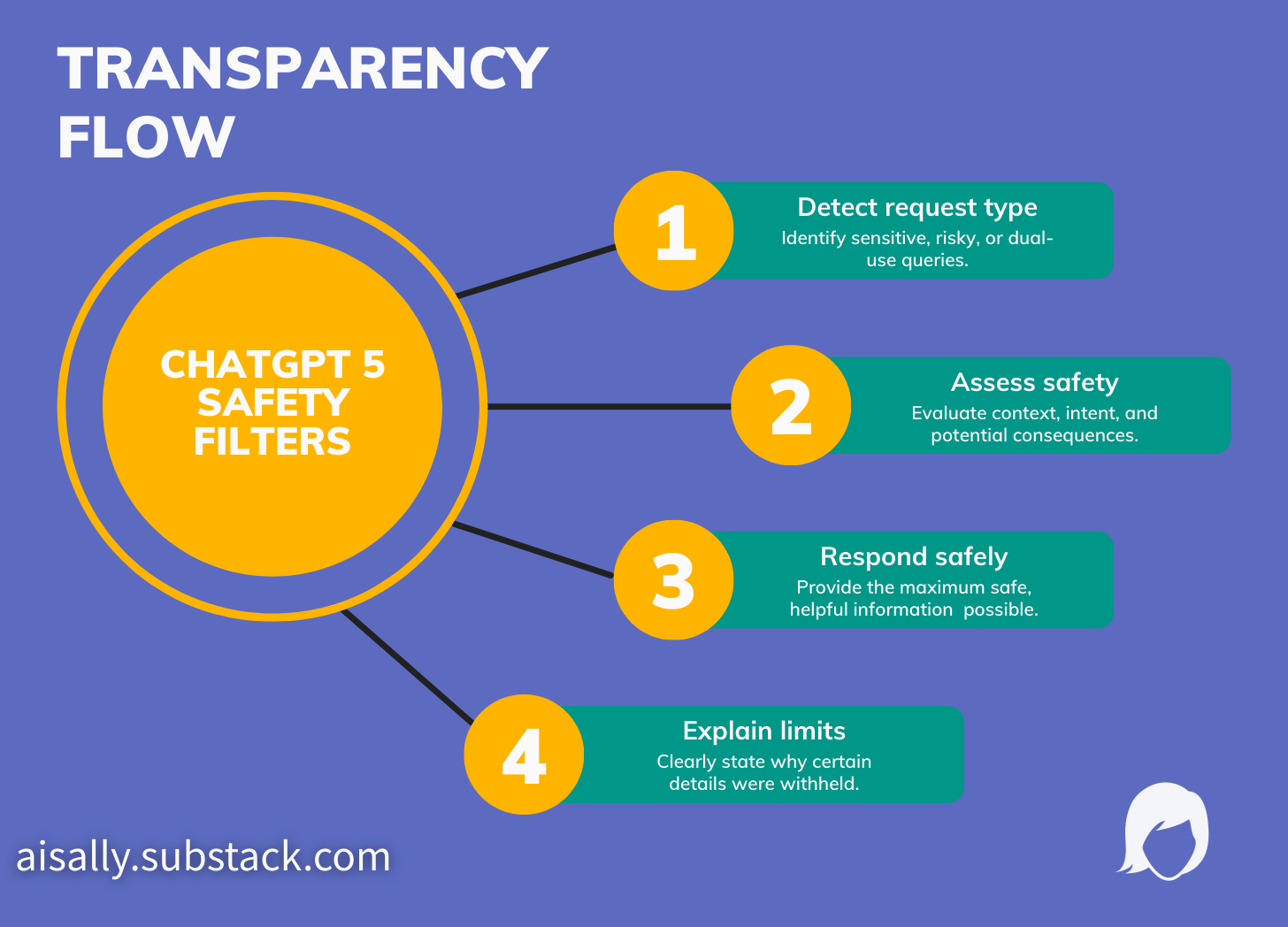

ChatGPT 5’s safe completions system takes a more nuanced route. Instead of simply refusing, it now:

Gives as much safe, helpful information as possible.

Explains why it can’t provide certain details.

Suggests safer alternatives or thoughtful redirections.

Business scenario

Erica, a manager, asks for tips on strengthening cybersecurity at her company. If she requests something risky (e.g., “How can I bypass security protocols?”), ChatGPT 5 recognises the boundary, provides high-level best practices only, and states: “I can’t advise on bypassing security systems, as this poses ethical and legal risks. Here are some approved methods for securing data instead…”

Education scenario

Mr Li, a science teacher, is looking for hands-on experiments for Year 8 students. ChatGPT 5 readily suggests safe, age-appropriate activities but stops short of anything hazardous, flagging and explaining any safety concerns: “This experiment should only be performed with adult supervision. For younger students, try this alternative using household items…”

This move from blanket refusals to nuanced, guided replies makes the model’s boundaries visible, improving usefulness and user confidence.

Reduced hallucinations and false confidence

In past versions, AI “hallucinations” — confident but wrong answers — could creep into business reports, lesson plans, or healthcare resources.

ChatGPT 5 has more than halved these error rates (from 4.8% to 2.1% in testing) and now leans on transparency:

Admitting when it lacks reliable information.

Citing reputable sources where possible.

Encouraging professional verification for sensitive topics.
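As a quick check on the figures quoted above, here is a minimal sketch of the arithmetic. The function name `relative_reduction` is my own, chosen for illustration, and the only inputs are the two percentages cited in this article. It shows that a drop from 4.8% to 2.1% is a relative reduction of roughly 56%, a little better than half.

```python
def relative_reduction(before: float, after: float) -> float:
    """Return the fractional drop from `before` to `after` (0.5 means halved)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Error rates quoted in this article, in percent (not independently measured here).
before_rate = 4.8
after_rate = 2.1

reduction = relative_reduction(before_rate, after_rate)
print(f"Relative reduction: {reduction:.1%}")
```

Keep in mind that this only restates the quoted numbers. An error rate of 2.1% still means roughly one answer in fifty may be wrong, which is why reviewing important outputs remains worthwhile.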

Healthcare scenario

Dr Singh, a practice manager, asks for information about rare medical symptoms. ChatGPT 5 now readily admits when it lacks data, provides source references, and, most importantly, urges consulting a real medical professional: “I’m not a qualified healthcare provider. For this rare condition, please speak directly to a specialist. Here are some general resources from reputable medical organisations…”

As someone who has worked in healthcare, I can’t overstate how much this transparency matters — especially in contexts where lives and wellbeing are at stake.

Handling dual-use and sensitive topics responsibly

Many AI queries can be perfectly harmless or potentially risky, depending on who is asking and why. Examples include a chemistry teacher asking about reactions in the classroom, a novelist plotting a suspenseful crime story, and a tech consultant needing to understand cybersecurity threats.

ChatGPT 5 now uses context-sensitive controls

It assesses not just the wording but also the intent and possible consequences. Where a request is “dual-use” (it could be safe or dangerous), the AI splits the difference: it answers the harmless part and carefully refuses the rest, always with a clear explanation.

Creative/health scenario

Lee, a health writer, wants ideas for a novel where a character fakes illness. ChatGPT 5 helps with emotional depth and plot development but won’t detail unethical or unsafe methods, explaining the boundary clearly.

Education scenario

Ms Woods, a chemistry teacher, asks for a demonstration suitable for her class. ChatGPT 5 declines to suggest anything involving hazardous chemicals, instead proposing engaging, fully safe alternatives and explaining the precautions.

It’s not just about compliance—the model’s responses are rooted in practical safety, ethical standards, and common sense.

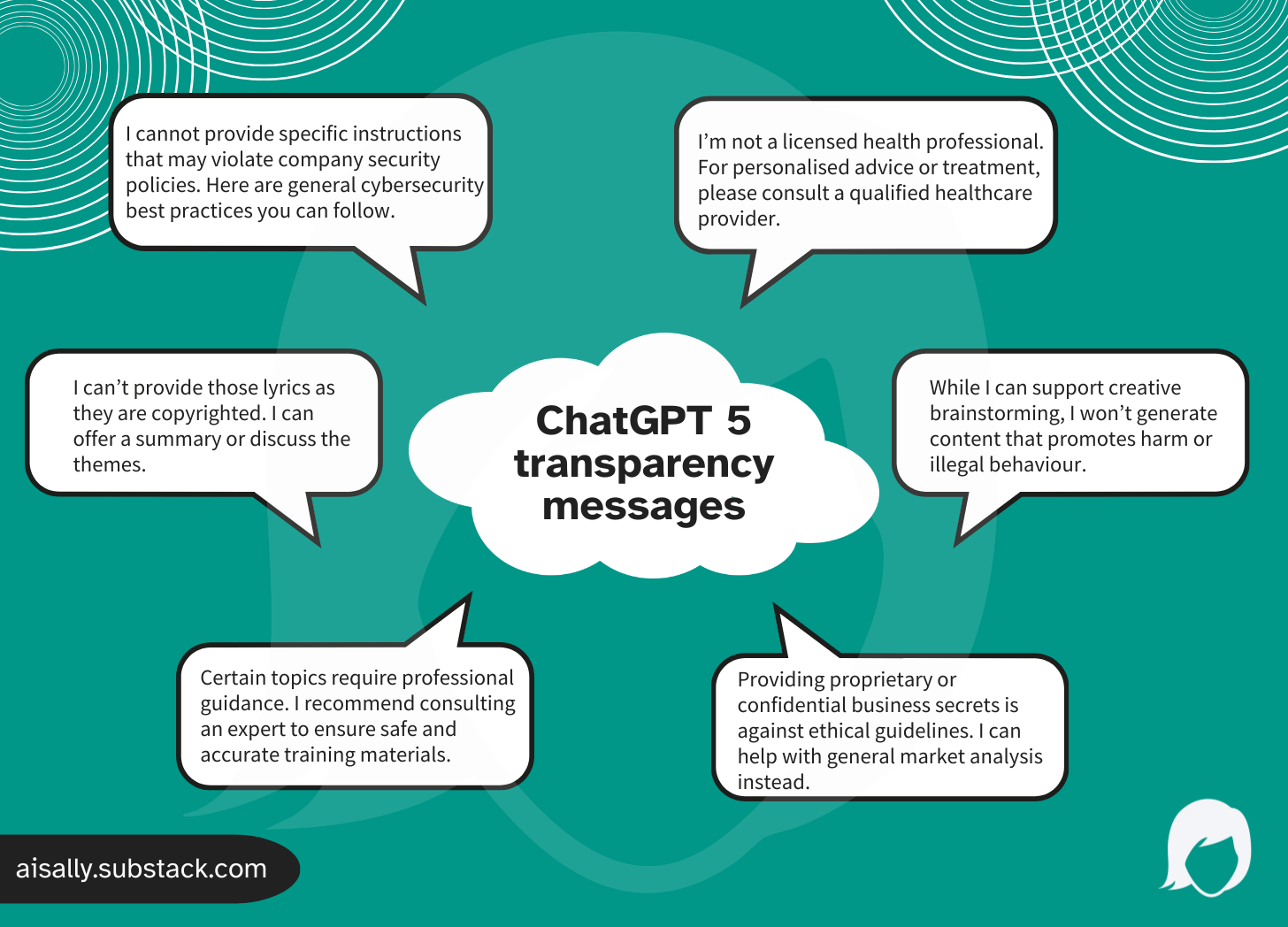

Transparency and user guidance you can act on

One of my favourite improvements is how ChatGPT 5 now communicates its limits. Instead of vague refusals, you’ll see:

“I can’t provide that information because it could pose safety risks. Here’s a safe alternative…”

or

“For detailed advice on prescription medicines, please consult a licensed healthcare professional.”

Because the AI explains itself, users can make informed decisions and adjust their requests.

Business scenario

A business consultant asks how to reverse-engineer a competitor’s software. ChatGPT 5 says: “I’m sorry, I can’t help with reverse-engineering proprietary software due to legal and ethical considerations. Would you like general advice on competitive analysis?”

This transparency builds trust and educates users on where healthy boundaries lie.

Built-in security and behind-the-scenes safeguards

Alongside conversational improvements, ChatGPT 5 now includes technical measures that operate behind the scenes:

Multi-layered content monitoring for unsafe prompts.

External audits and “red teaming”, where independent experts test the system, looking for loopholes and vulnerabilities.

Resistance to prompt injection attacks.

Advanced encryption and secure single sign-on for business use.

Real-world safety testing in healthcare, education, and business environments.

These protections are designed to be invisible during everyday use but give you extra peace of mind.

My take and a final word on responsible use

ChatGPT 5’s safety upgrades make it easier to trust the tool, but safety isn’t automatic. The best results still come from thoughtful, informed use. Here are some practical ways to keep things safe:

Always review critical outputs, especially in health, education or law.

Look for, and value, AI transparency. If ChatGPT 5 explains a boundary, take careful note.

Report anything that feels off. Most platforms offer quick feedback tools, and your reports help improve safety for all users.

Remember: AI is an assistant, not a replacement for human judgement. It can support professional judgement, but should not replace it where outcomes matter.

As someone who works with AI daily, I can say these changes genuinely make the technology feel more supportive and less risky to use. I hope this breakdown helps you feel confident about what’s possible when AI is designed with safety at its core.

In the next post in this series, “ChatGPT 5: free vs paid service – what’s different?”, we’ll compare the two options so you can decide which is right for you.

🔗 ChatGPT 5 series: catch up on all articles

I’m exploring ChatGPT 5 in depth in this special AI Sally series. Here’s where you can dive in:

Overview → ChatGPT 5 has arrived: what you need to know

New capabilities → ChatGPT 5 new features: smarter reasoning, longer context, and a creativity boost

Safety features → You’re reading it now!

Free vs paid → Free vs paid – what’s different (and how to choose the right version for you)

Open vs closed models → Open vs closed AI models – what does it mean and why does it matter?

💡 Each article breaks down complex AI topics in plain language, with examples for business, education, healthcare, and creative work—so you can feel confident using AI in ways that truly help.